Lately, I’ve been feeling the lack of a well-deliberated, explicit moral code. The world is changing really fast – we have Elon Musk trying to set up a human colony on Mars while Earth’s bio-ecosystem is degrading by the day. So, should I support the investment of resources into making Mars habitable while Earth is gradually becoming uninhabitable?

This, obviously, isn’t the only question. Every day, I feel like I need to decide which way to swing on controversial topics. People have strong opinions about things like genetically engineered babies, bitcoin, nuclear power and other new technologies. I know enough about cognitive biases to know that I shouldn’t trust my gut fully on these questions. My gut simply doesn’t know enough to have a good opinion on complex societal issues. Instead of relying on my gut, I need to rely on deliberate thinking to make moral choices.

Everyone should have their moral code written

First, a meta-point: why do we need a moral code? As I said, it’s because as the world grows more complex, so does the question of what’s right and what’s wrong. You simply can’t intuit your way to having an opinion on questions like whether money printing by governments is good, or whether Universal Basic Income is morally wrong.

Why do you need to have moral opinions? You can refrain from having moral opinions on many issues, but every now and then you come across a situation where you need to decide what to do. For example, if you get a lucrative job at a cigarette company, would you take it?

Obviously, there’s no objectively right or wrong answer to moral questions and that’s precisely why nobody else can help answer this for you. If you don’t have an explicit moral code, you’ll go with your mood which can swing either way – a) take the job, money is good and people smoke by their own choice; b) don’t take the job, you can make money somewhere else that doesn’t involve being complicit in killing millions every year.

What follows is the moral code I’ve come up with for myself. It represents my current way of thinking and I’m sure as my thinking and life experiences evolve, this will evolve too.

It may do you good to sit down and write something for yourself to help guide you in life. There’s no universally correct morality, so yours can be totally different from mine.

Tapping into moral intuition

The first thing I did was to reflect upon how my gut makes me behave by default. This moral gut-feeling could serve as a great starting point to question, extend and refine into a more well-thought-out moral code.

So how do I behave intuitively?

I avoid doing things that have obvious and immediate harm to someone.

For example, I quit eating meat because I know it obviously kills an animal that can feel pain. And I’ve never been okay with someone suffering because of my actions.

But I do not go out of my way to do a cost-benefit analysis when the cost or harm directly attributable to me is hard to calculate because my actions are non-unique (fungible).

For example, I switch on the AC or travel in an airplane without guilt even though I know carbon emissions are heating the planet. This is because I feel my actions there are fungible. If I don’t switch on the AC, someone else will. You may think that this can justify any action, but that isn’t the case. Killing an animal or stealing from someone is an obvious harm that wouldn’t happen if the doer refrains from doing it.

Where the harm is collective, my actions are replaceable, and I get an obvious benefit (such as a comfortably cool room or comfortable travel by air), I’m okay with my choices (even though I simultaneously wish we could figure out a way to minimize the collective harm).

I do not feel compelled to sacrifice my interests for anyone else apart from people in my close circle (family, friends, and colleagues).

In other words, even though I admire Mother Teresa, I know I’m not like her. I do not resist helping others, but I am not driven by it. My drive in life has more to do with learning and curiosity than with helping others. This may come across as repulsive, but it is just an honest appraisal of how I’m driven. Helping others is obviously a good thing, but I’m not actively driven by this impulse.

Systematizing intuition into a moral code

It seems like my intuition on moral rights and wrongs can be summed up in two principles:

- If my actions cause identifiable suffering to someone, don’t do it (even if it brings me pleasure)

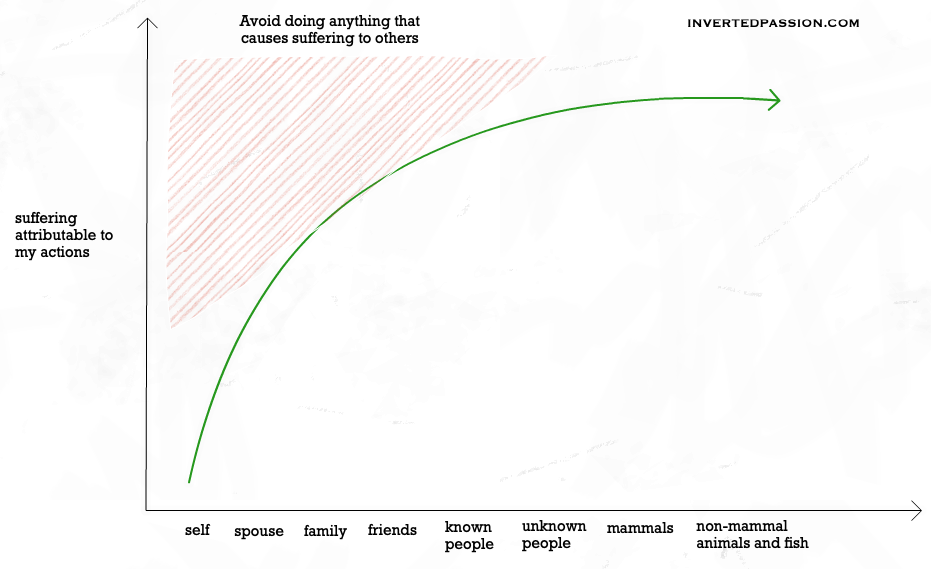

- The closer someone is to me, the lower the threshold for such suffering I ought to have

Here’s roughly what I mean:

In a nutshell, my first priority is to ensure my actions do not cause direct suffering to anyone, even if there are personal benefits that I may get from such actions.

What I mean is that my pleasure (which is often temporary and fleeting) isn’t worth the suffering of someone else. Pain and suffering hold a much higher moral priority than pleasure and happiness.

Recently, I tweeted about this asymmetry between pain and pleasure. They’re not simply a flip of each other; they’re fundamentally different entities.

This is a view inspired by negative utilitarianism, which I first came across via David Pearce. My podcast interview with him made me go deeper and try to sort out where I stand in terms of morality.

Moral calculus: solving the Trolley Problem

The real world is rarely as simple as identifying who is harmed and who benefits. Tradeoffs exist everywhere: often we need to choose between actions that lead to different consequences. The famous moral dilemma, the Trolley Problem, rears its head in different guises.

Even though a decision as grave as the Trolley Problem is unlikely to arise, similar decisions have to be made on a regular basis. For example, am I okay with the use of animals for testing beauty products? What about the use of animals for clinical research? Similarly, should I support nuclear power when it clearly has its pros and cons?

I need a framework to decide where I land when it comes to choices that entail tradeoffs (which is actually almost everything).

For my moral calculus, I’m inclined to adopt a flavor of negative utilitarianism.

Define suffering as a state of mind that a person would willingly have eliminated or ended.

Take decisions such that your actions minimize the total amount of suffering in the world (weighing high-intensity suffering a lot more than low-intensity suffering).

Disregard pleasure and happiness in the calculation, as reducing suffering is of higher moral importance (and often more permanent) than increasing pleasure (which is often fleeting).

This principle helps solve the Trolley Problem for me: if I’m ever put in a position to decide between the killing of 1 unknown person and the killing of 5 unknown people, I’d be okay making that decision and choosing the former.

However, since high-intensity suffering has a higher priority, I wouldn’t be OK with the slow roasting of 1 person to avoid causing painless death for 5 people.
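To make the intensity weighting concrete, here is a minimal sketch. The 0-to-1 intensity scale, the squared weighting and the example numbers are purely illustrative assumptions, not measured quantities. The only point is that a superlinear weight lets one case of extreme suffering outweigh several milder ones.

```python
# Illustrative sketch only: the intensity scale (0 to 1), the exponent and
# the example numbers are assumptions chosen to show the idea.

def weighted_suffering(beings: int, intensity: float, exponent: float = 2.0) -> float:
    """Total suffering, with intense suffering weighted superlinearly."""
    return beings * intensity ** exponent


def less_bad(options: dict[str, tuple[int, float]]) -> str:
    """Return the name of the option with the least weighted suffering."""
    return min(options, key=lambda name: weighted_suffering(*options[name]))


# Classic case: the same kind of death either way, so the numbers decide.
classic = {"one dies": (1, 0.3), "five die": (5, 0.3)}

# Variant: one person suffers intensely vs. five die painlessly.
variant = {"one is slowly roasted": (1, 1.0), "five die painlessly": (5, 0.3)}

print(less_bad(classic))  # one dies
print(less_bad(variant))  # five die painlessly
```

With a plain linear weight (an exponent of 1), the same numbers would favor the roasting, which is exactly the conclusion I want the weighting to rule out.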

Expanding my moral calculus

There are a few more nuances I want to introduce in my moral calculus.

- Fungibility v/s non-fungibility of my actions

- Certainty of consequences v/s uncertainty

- Doing something v/s not doing something

- Optimality v/s sub-optimality of actions

Fungibility of actions

Certain actions are non-fungible. That is, they wouldn’t happen if you didn’t do them. For example, stealing money from someone is non-fungible. If you don’t steal it, it’s highly unlikely that it would get stolen.

Absolutely avoid non-fungible actions that cause suffering.

Other actions are fungible in the sense that if you don’t do them, someone else will do a very similar action. E.g. carbon emissions related to switching on the AC or purchasing bitcoin.

If your actions are fungible, weigh down your contribution to potential suffering.

Certain v/s uncertain suffering

In some cases, you know for certain that your actions cause harm. For example, eating meat involves killing, which definitely harms a living being.

Many people talk about the fungibility of eating meat. Their reasoning goes something like this: “if I don’t buy the chicken, someone else will. The chicken has died already, so I might as well buy it”. I agree with them that eating meat is fungible, but I still reject it because it has caused certain suffering: I know the chicken would have suffered.

On the other hand, in other cases, you’re not sure how much harm your actions will cause. For example, the harm of climate change is much more diffuse and uncertain. We know it is likely bad but don’t know how bad and for whom.

As a rule of thumb, weigh certain suffering significantly more than uncertain suffering.

Doing something v/s not doing something

Some people argue that not doing something is also a choice and hence should count in the moral framework. They would say that if you’re not preventing evil in the world, you’re complicit in it.

I disagree with that.

Right now, there is an infinite number of choices you’re NOT making. You cannot possibly be held accountable for all those choices. Even deciding whether your inaction is net morally positive or negative is impossible because infinities are impossible to calculate.

Hence, I adopt the principle of only judging the actions and decisions actively taken.

Optimality v/s sub-optimality of actions

Suppose a theoretically perfect sequence of actions exists which will minimize the net suffering. Let’s call it the optimal action.

For example, you’re keen to minimize your total carbon footprint and you’re deliberating how to best plan your travel to do so. You have several options:

- Travel by foot across cities

- Take an airplane ride

- Quit your job and invent an electric plane that runs on solar power

- Don’t do anything until you figure out the absolute best way to minimize your carbon footprint. (Perhaps there’s even a better way than electric planes)

Which option will you take? It’s easy to see that one cannot be expected to perfectly minimize negative consequences. As one lives, taking decisions and actions becomes necessary. And those decisions will likely always be sub-optimal.

Hence, I make the case for pragmatism. That is, even though the priority should be minimizing total suffering in the world, do your best given the resources and skills you have and the opportunities available in the world around you. Prioritize reduction of suffering but be pragmatic about it.

Summing up

So, whenever a decision needs to be made, I’ll prefer the option that reduces net suffering in the world where each option’s value is roughly evaluated as:

Suffering reduction value of an action = intensity of suffering reduced by the action × closeness to me of those who’re suffering × certainty of suffering reduction by the action × degree of non-fungibility of my action × number of impacted conscious beings

Whichever action seems to leave the least total suffering by this rough measure is the one I’ll feel right about.
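Written with symbols, the rough formula might look like the following. The symbol names are introduced here only for illustration, and I’m assuming the fungibility factor enters as 1 − f, so that a more replaceable action counts for less, as the section on fungibility suggests.

```latex
V(a) = I \cdot c \cdot p \cdot (1 - f) \cdot n
```

Here V(a) is the suffering reduction value of action a, I is the intensity of suffering reduced, c is the closeness to me of those who are suffering, p is the certainty of the reduction, f is the degree of fungibility of my action (0 for fully non-fungible, 1 for fully fungible), and n is the number of impacted conscious beings.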

Obviously, the choices cannot be calculated perfectly. It’s not mathematics. So when I encounter a decision to make, I expect to follow roughly this algorithm:

- How fungible is my action?

  - If it’s fungible, the rest doesn’t matter much.

- Who is likely to see a reduction in suffering because of my decision and who is likely to see an increase?

  - What’s the number of conscious beings who’ll see their suffering impacted?

  - How certain am I about the different types of suffering involved?

- Generate additional options for actions, if I can

  - But be pragmatic about it.

  - Use the resources and opportunities I have and do my best.

- From the available options, pick an action that minimizes net suffering

  - Weigh beings closer to me higher

  - Weigh highly intense suffering higher
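The steps above can be sketched in code. This is a rough illustration under stated assumptions, not a real calculator: every field name, the 0-to-1 scales, the squared intensity weighting and the example numbers are hypothetical choices made for the sketch.

```python
from dataclasses import dataclass, field

# Illustrative sketch only. Every field, scale (0 to 1) and number below is
# an assumption made for the example, not a real measurement.


@dataclass
class Effect:
    beings: int       # number of conscious beings affected
    intensity: float  # 0..1, how intense the suffering is
    closeness: float  # 0..1, how close the beings are to me
    certainty: float  # 0..1, how sure I am that the effect happens
    increases: bool   # True if suffering goes up, False if it goes down


@dataclass
class Option:
    name: str
    fungibility: float  # 0 = only I would do this, 1 = fully replaceable
    effects: list[Effect] = field(default_factory=list)


def net_suffering(option: Option) -> float:
    """Rough net suffering attributable to me; lower is better."""
    total = 0.0
    for e in option.effects:
        # Intensity is squared so that intense suffering weighs more.
        size = e.beings * e.intensity ** 2 * e.closeness * e.certainty
        total += size if e.increases else -size
    # Fungible actions are weighed down: someone else would do them anyway.
    return total * (1.0 - option.fungibility)


def choose(options: list[Option]) -> Option:
    """Pick the option with the least net suffering."""
    return min(options, key=net_suffering)


options = [
    Option("eat chicken", 0.5, [Effect(1, 0.8, 0.2, 1.0, increases=True)]),
    # A generated additional option with no identifiable suffering.
    Option("eat lab-cultured meat", 0.5),
]

print(choose(options).name)  # eat lab-cultured meat
```

The numbers matter far less than the shape: fungibility scales everything down, certainty and closeness scale individual effects, and extra options can be added to the list before choosing.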

Moral code as a guide to life

Notice that I’m not passively choosing between the options presented to me, but I’m actively generating additional options.

For example, rather than just choosing between not eating meat v/s eating meat, can I also bring an additional option of eating lab-cultured meat? Similarly, for the Trolley problem, rather than choosing one person dying v/s five people dying, can I try to find a way to stop or derail the Trolley?

For a rationalist, a moral code can feel like mathematics, so it’s easy to beat oneself up for not finding perfect answers. But that’s misguided because all the inputs in the equation/algorithm are fuzzy as well.

Hence I want to reinforce the pragmatic use of one’s moral code. Let it be a guide for your life but don’t get trapped by trying to live a perfectly moral life. That’ll never happen because moral codes themselves are ambiguous at the extremes (since words themselves are ill-defined at the extremes).

As long as you remember that moral codes are not mathematical theorems, you’ll reap all the benefits they provide, of which leading a life with confidence is the most prominent one.

Thanks Aakanksha Gaur for reviewing the draft.
