Recently finished “The Spike: An Epic Journey Through The Brain in 2.1 Seconds” by Mark Humphries and here are my notes from it.

1/ As the book’s subtitle suggests, it’s about the neural code our brain uses for doing what it does.

The book is rich with details and I learned a lot of new facts and ideas about the brain. I highly recommend the book to anyone who has an interest in neuroscience.

2/ Since writing about an object as complex as the brain can fill encyclopedias, I will focus my notes on what I know now that I didn’t know before reading the book.

3/ Quick background first: the adult human brain has about 86 billion neurons. Roughly a quarter of those are in the cortex (cerebrum), while about three quarters are in the cerebellum.

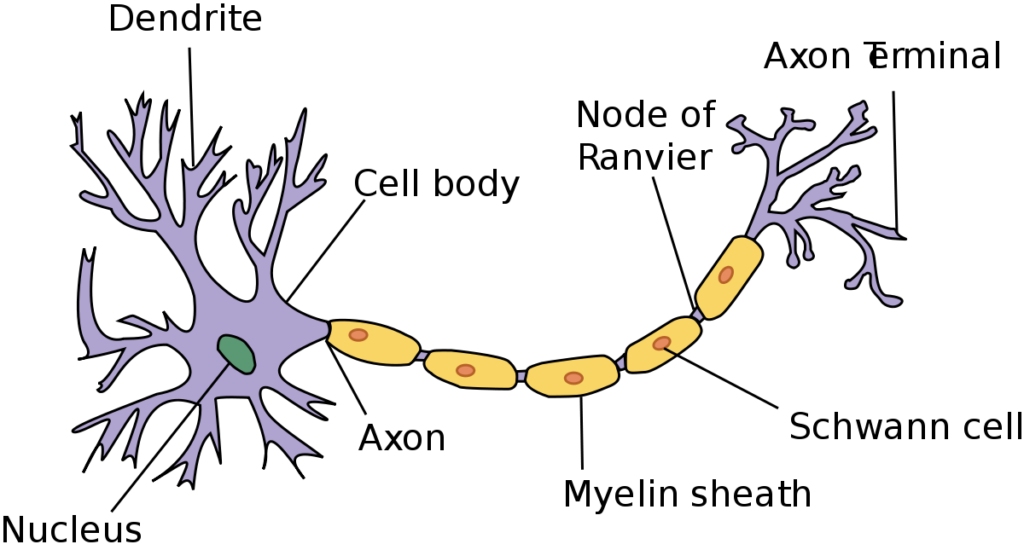

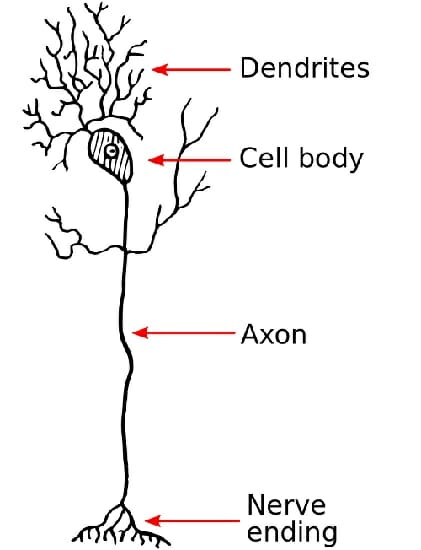

4/ Each neuron on average connects to ~7500 other neurons. This was new and shocking to me because most illustrations of the neuron make you believe that neurons perhaps have a maximum of 10 connections.

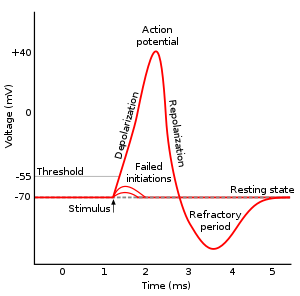

5/ Neurons communicate with each other by sending electric spikes (called action potentials) along an elongated part of their body known as the axon. These spikes (in most animals) are all-or-nothing phenomena, and each neuron has its own characteristic spike (based on the number of ion channels on its membrane, axon width, and so on).

6/ Some primitive animals and early layers of our visual system don’t use spikes. They communicate via simple diffusion of voltage (by diffusion of ions). This was new to me as I thought all neurons communicate via action potentials.

7/ There are two types of neurons: excitatory and inhibitory. Roughly 90% of connections to a neuron are excitatory while the other 10% are inhibitory. But the inhibitory connections are stronger, which ensures that for any neuron excitation and inhibition are kept in balance.
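As a back-of-the-envelope check on the 90/10 split above, here is a minimal sketch of how much stronger each inhibitory connection would need to be for the two sides to cancel out. It assumes every connection is equally active, which is my simplification and not a claim from the book; the function name is hypothetical.

```python
def required_inhibitory_strength(frac_excitatory, excitatory_strength=1.0):
    """Per-connection inhibitory strength that balances total excitation.

    Assumes (for illustration only) that all connections are equally active.
    """
    frac_inhibitory = 1.0 - frac_excitatory
    # Balance condition: frac_exc * exc_strength == frac_inh * inh_strength
    return frac_excitatory * excitatory_strength / frac_inhibitory


ratio = required_inhibitory_strength(0.9)
print(f"Each inhibitory connection must be ~{ratio:.0f}x as strong as an excitatory one")
```

Under that assumption, a 90/10 split only balances if each inhibitory connection is roughly nine times as strong as an excitatory one.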

8/ One incoming spike at a neuron is often not enough to generate an action potential (or inhibit an action potential that’s about to happen). It requires hundreds of excess spikes (above the background inhibitory or excitatory spikes) arriving together to change the spiking behavior of the neuron.

9/ A neuron’s spike can have a different level of impact on each of the thousands of neurons it connects to. This connection strength is modulated by the number of receptors at the downstream neuron and the amount of neurotransmitter released by the upstream neuron.

10/ Our brain’s activity never ceases (except when we die), so the neurons inside the brain are kept “at the edge of firing” with these equal excitatory and inhibitory activations from the incoming thousands of connections from other neurons.

11/ Neurons typically don’t fire deterministically. For the same input, sometimes they’d fire, sometimes they won’t. This randomness is due to the razor’s edge balance between randomly arriving excitatory/inhibitory spikes along with the inherent randomness of biology (sometimes channels open, sometimes they don’t).
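Points 8/, 10/ and 11/ can be illustrated with a toy "leaky integrate-and-fire" neuron. This is a rough sketch, not the model used in the book: the leak, weight, threshold and noise values are all made-up assumptions, and excitation and inhibition are drawn as random numbers with the same average.

```python
import random


def count_spikes(seed, extra_excitation=0.0, n_steps=1000):
    """Count spikes of a toy leaky integrate-and-fire neuron in one trial.

    All parameters are illustrative assumptions, not measured values.
    """
    rng = random.Random(seed)
    leak = 0.9        # fraction of the potential kept at each time step
    weight = 0.3      # how strongly net input moves the potential
    threshold = 0.5   # potential at which the neuron fires
    potential = 0.0
    spikes = 0
    for _ in range(n_steps):
        # Excitation and inhibition have the same mean, so on average they
        # cancel and only their random fluctuations move the potential.
        excitation = rng.gauss(1.0 + extra_excitation, 0.3)
        inhibition = rng.gauss(1.0, 0.3)
        potential = leak * potential + weight * (excitation - inhibition)
        if potential >= threshold:
            spikes += 1
            potential = 0.0  # reset after the spike
    return spikes


# Same average input on every trial, different random fluctuations.
balanced_counts = [count_spikes(seed) for seed in range(5)]
driven_counts = [count_spikes(seed, extra_excitation=1.0) for seed in range(5)]
print("balanced:", balanced_counts)
print("with excess excitation:", driven_counts)
```

In the balanced case the neuron fires only occasionally, and a different number of times on each trial even though the average input is identical. Adding a sustained excess of excitation pushes it over the edge and it fires far more often.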

12/ The failure to produce a spike gives a neuron several advantages: a) it lets the neuron dynamically adjust how much it relies on an input (by requiring several successive spikes before an action potential happens, it responds only when the incoming neuron is firing at a high rate); b) random failures help prevent “overfitting” in the network and hence help in generalization.

13/ Because a neuron doesn’t fire deterministically, to know what a neuron is coding, we have to look beyond just one activation spike. We have to see patterns in spikes.

The two main camps of neural coding are spike rate coding and spike time coding.

14/ In spike rate coding, a neuron changes its firing rate (from its long-term average baseline) to encode a particular variable. For example, a neuron in the visual cortex might increase its firing rate upon seeing figures moving left to right while decreasing it for figures moving right to left.
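To make rate coding concrete, here is a small sketch that counts spikes in a time window and compares the result with the baseline. The spike times are invented for illustration and the function name is my own.

```python
def firing_rate(spike_times, start, end):
    """Spikes per second within the window [start, end), times in seconds."""
    count = sum(1 for t in spike_times if start <= t < end)
    return count / (end - start)


# Made-up spike times (seconds) for one neuron; the stimulus starts at t = 1.0.
spike_times = [0.1, 0.5, 0.9, 1.05, 1.15, 1.3, 1.4, 1.55, 1.7, 1.8, 1.95]

baseline = firing_rate(spike_times, 0.0, 1.0)   # before the stimulus
response = firing_rate(spike_times, 1.0, 2.0)   # during the stimulus
print(f"baseline {baseline:.0f} Hz, response {response:.0f} Hz, change {response - baseline:+.0f} Hz")
```

In a rate code, the change from baseline (here from 3 Hz to 8 Hz) is what carries the information, not the exact timing of any single spike.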

15/ In spike time coding, a neuron encodes information in the time delay between two successive spikes. For example, there might be a neuron that uses this delay to communicate the angle, measured from the center, from which a sound is coming.
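A timing code can be sketched the same way. The example below reads the delay between successive spikes and maps it to an angle. The linear mapping (0.1 ms per degree) is purely hypothetical, chosen only to show the idea, and says nothing about how real auditory neurons do it.

```python
def inter_spike_intervals(spike_times):
    """Delays (in seconds) between each pair of successive spikes."""
    return [later - earlier for earlier, later in zip(spike_times, spike_times[1:])]


def decode_angle(interval_ms, ms_per_degree=0.1):
    """Hypothetical linear code: every 0.1 ms of delay means one more degree."""
    return interval_ms / ms_per_degree


spike_times = [0.010, 0.013, 0.019]  # made-up spike times in seconds
intervals_ms = [1000 * dt for dt in inter_spike_intervals(spike_times)]
angles = [decode_angle(ms) for ms in intervals_ms]
print([round(a) for a in angles])
```

Here the same three spikes carry two different values (about 30 and 60 degrees) purely through the gaps between them, which a rate code would average away.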

16/ The brain uses both types of coding (+ supposedly more undiscovered ones) in different neural circuits for different functions. Neurons near the sensory organs rely more on timing while the cortical neurons that do computation rely more on spike rates.

17/ How often do neurons spike? One surprising recent discovery has been that while neurons fire at about 1 Hz on average, most neurons don’t spike at all. This implies two things: a) most spikes in the brain are produced by a small percentage of neurons that spike at more than 10 Hz; b) most neurons are “dark neurons” that don’t spike at all.
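The arithmetic behind this is easy to check. The split below (90% silent, 10% firing at 10 Hz) is a hypothetical example I picked to reproduce a 1 Hz average, not a figure from the book.

```python
def mean_rate(groups):
    """Population-average firing rate from (fraction_of_neurons, rate_hz) pairs."""
    return sum(fraction * rate for fraction, rate in groups)


# Hypothetical split: 90% silent neurons, 10% firing at 10 Hz.
groups = [(0.9, 0.0), (0.1, 10.0)]
print(f"average rate: {mean_rate(groups):.1f} Hz")
```

An average of 1 Hz is therefore compatible with a brain in which the typical neuron is silent and a small minority does all the talking.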

18/ These dark neurons were a surprise for me but it makes sense. When we put electrodes into a brain to measure activity, we’re automatically biasing towards measuring active neurons. How would we ever detect neurons that don’t spike? Only recently have modern methods revealed that most neurons are silent most of the time.

19/ What are these dark neurons for? Nobody knows but neuroscientists guess that either they may be super-specialized (fire only when you see Angelina Jolie), or they may represent spare capacity for learning new concepts (to be used when the brain needs to form new circuits).

20/ How do neurons know who to connect to? At first, when we’re born, the wiring is random. After that, we don’t know the learning algorithm used by the brain but we do know that two neurons that have similar spike activity are connected more often than chance would predict. This is perhaps because the probability of communicating whatever a spike is representing increases if neurons with similar activity get recruited to fire together.

This is why people say “neurons that fire together, wire together”, although the mechanism for this is still a bit of a mystery.
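The slogan is usually formalised as a Hebbian learning rule. Since the brain’s actual learning algorithm is unknown, treat the sketch below as the textbook stand-in rather than what neurons really do; the spike trains and the learning rate are made up.

```python
def hebbian_weight(pre_spikes, post_spikes, learning_rate=0.1):
    """Grow a connection weight each time both neurons spike in the same time bin."""
    weight = 0.0
    for pre, post in zip(pre_spikes, post_spikes):
        weight += learning_rate * pre * post  # nonzero only when both fired
    return weight


# Made-up spike trains, one entry per time bin (1 = spike, 0 = silent).
neuron_a = [1, 0, 1, 1, 0, 1, 0, 1]
neuron_b = [1, 0, 1, 1, 0, 1, 0, 0]  # fires mostly together with neuron_a
neuron_c = [0, 1, 0, 0, 1, 0, 1, 0]  # fires when neuron_a is silent

print("a -> b:", round(hebbian_weight(neuron_a, neuron_b), 2))
print("a -> c:", round(hebbian_weight(neuron_a, neuron_c), 2))
```

The connection between the two neurons with similar activity grows, while the connection to the neuron that never fires alongside `neuron_a` stays at zero.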

21/ Neurons aren’t passive units of computation but active agents: they grow their dendrites to seek inputs, increase or reduce their ion channels at different places to change their long-term behavior, elongate their axons, modulate the strength of their connections, and so on.

In fact, each neuron should be modeled as an agent with goals.

22/ The book also goes into a bit of depth on dendrites. The neuron was historically assumed to be the fundamental unit of computation, one that integrated incoming spikes and fired when its electric potential rose beyond a threshold. But today we know that dendrites are also doing computation.

23/ Individual dendrites have spikes of their own, which may or may not trigger the neuron’s action potential. All this suggests that even a single neuron is perhaps doing sophisticated computations at its own level and can be modeled as a multi-layer artificial neural network.
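Here is a minimal sketch of what “a neuron as a multi-layer network” could mean: each dendritic branch applies its own threshold first, and the cell body then sums the branch outputs. The thresholds and the three-branch layout are assumptions for illustration only.

```python
def branch_output(inputs, branch_threshold=2):
    """A dendritic branch produces a local spike only if enough of its inputs are active."""
    return 1 if sum(inputs) >= branch_threshold else 0


def neuron_fires(branches, soma_threshold=1):
    """Layer 1: each branch decides locally. Layer 2: the cell body sums the branch spikes."""
    dendritic_spikes = sum(branch_output(branch) for branch in branches)
    return dendritic_spikes >= soma_threshold


# Both patterns contain exactly two active inputs (1s) spread over three branches.
clustered = [[1, 1, 0], [0, 0, 0], [0, 0, 0]]  # both on the same branch
scattered = [[1, 0, 0], [0, 1, 0], [0, 0, 0]]  # on different branches

print(neuron_fires(clustered))  # True
print(neuron_fires(scattered))  # False
```

A neuron that simply added up all its inputs could not tell these two patterns apart, since both have two active inputs. With a local threshold on each branch, where the inputs land starts to matter.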

24/ The brain doesn’t need any “external” input for generating its activity. An individual neuron can spike without any input (biology is messy and many things can happen that generate such a spontaneous spike). Such spontaneous spikes can then reverberate in the network through all sorts of feedback loops.

This is why you can culture neurons in a dish and gradually see them settle into some activity dynamics of their own. What are these petri-dish brains dreaming of? Nobody knows!

25/ How are we able to have sub-second reactions when there are so many connections and loops in the brain? Wouldn’t decision making in the complex circuits of the brain take forever? No, information doesn’t simply flow from senses to brain followed by motor actions. Our brain, instead, is actively predicting what’s going to happen next and what senses communicate is the error between the brain’s prediction and outside reality.

26/ So, if a ball is coming towards you, instead of waiting for signals about its successive locations, the brain is predicting its trajectory and already generating the signals that the eye is supposed to generate. This way the brain gets a head start and is able to use predicted information for deciding what to do next.
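The ball example can be sketched as a tiny prediction-error loop. The constant-velocity prediction and the positions below are my own simplifying assumptions; the brain’s predictions are certainly richer than this.

```python
def predict_next(previous, current):
    """Extrapolate the next position assuming constant velocity (a simplifying assumption)."""
    return current + (current - previous)


# Made-up ball positions (metres from the viewer) at successive time steps.
observed = [10.0, 8.0, 6.0, 4.5]

for step in range(2, len(observed)):
    predicted = predict_next(observed[step - 2], observed[step - 1])
    error = observed[step] - predicted  # only this surprise needs to be signalled
    print(f"step {step}: predicted {predicted}, observed {observed[step]}, error {error}")
```

When the prediction is right the error is zero and nothing new needs to be communicated. Only when the ball deviates from the expected path (the last step) does a non-zero error show up.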

27/ I think it is pretty incredible that the spikes generated by our incoming sensory data are indistinguishable from spikes generated by the brain’s predictions. Internally, these two are treated similarly.

Perhaps this is why we can’t tell if we’re dreaming or awake.

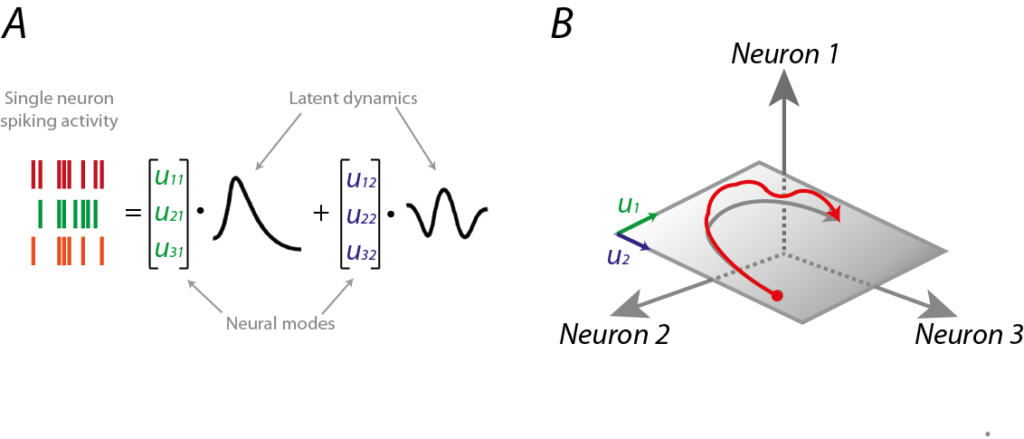

28/ For many tasks in the brain, a single neuron’s firing may not mean anything. Rather, the representation of the task may be encoded collectively by a population of neurons. This idea is called population coding and it may enable a few neurons to encode a lot of rich information.

29/ One benefit of population coding is that it allows individual neurons to be non-deterministic while collectively (through feedback loops) enabling the network to encode stable information (e.g. if one neuron doesn’t fire, the circuit can have another connected neuron fire).
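To get a feel for how unreliable parts can make a reliable whole, here is a small probability sketch. It uses a simple majority readout over independent neurons as a stand-in for the feedback loops mentioned above; independence and the 70% firing probability are both assumptions of mine.

```python
from math import comb


def majority_reliability(n_neurons, p_fire):
    """Probability that more than half of n independent neurons fire,
    when each one fires with probability p_fire."""
    needed = n_neurons // 2 + 1
    return sum(
        comb(n_neurons, k) * p_fire**k * (1 - p_fire) ** (n_neurons - k)
        for k in range(needed, n_neurons + 1)
    )


for n in (1, 11, 101):
    print(n, round(majority_reliability(n, 0.7), 4))
```

A single neuron that fires 70% of the time is a poor signal on its own, but the majority of a hundred such neurons is right almost every time.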

30/ The author cautions against reading too much into information encoded into a neuron. It may be possible that we decode some information from a neuron’s or a circuit’s activity and yet it may not be involved in the processing of that information. For example, if we can decode whether the organism just ate from a neuron’s activity, it may be because the neuron in question represents the organism’s internal body clock (which is correlated to hunger but not caused by it).

31/ For long-term memory storage, the hippocampus part of the brain seems to be important. But memories are not stored in the hippocampus. Rather, it seems to hold them for the short term and then distributes long-term storage of memory across the entire brain.

32/ Visual memories are stored in visual areas, auditory in auditory regions, and so on. This is perhaps why recalling the memory evokes feelings similar to the original experience. Memory and sensory information are the same information (perhaps at different levels of fidelity).

33/ The most incredible thing to me is that despite the discontinuous and erratic information flow in the brain, our conscious experience is continuous and virtually “error-free”. We don’t notice any abruptness and “missing holes” in the experienced world while the underlying biology of the brain is both faulty and laggy. If this isn’t magic, I don’t know what is!

34/ Our intuitions from digital computers break down for the brain. As must be evident by now, the brain is nothing like the computer on which you’re reading this. The most obvious differences are the parallel nature of the brain (vs. the sequential nature of a digital computer) and the level of redundancy that the brain exhibits (you take out a small piece and the person with the brain keeps on living normally).

35/ The book has a phrase that I loved: “ask not what a neuron sends, but what it receives”. I think it nicely reinforces that the magic of the brain lies in the network and not in any individual neuron.
